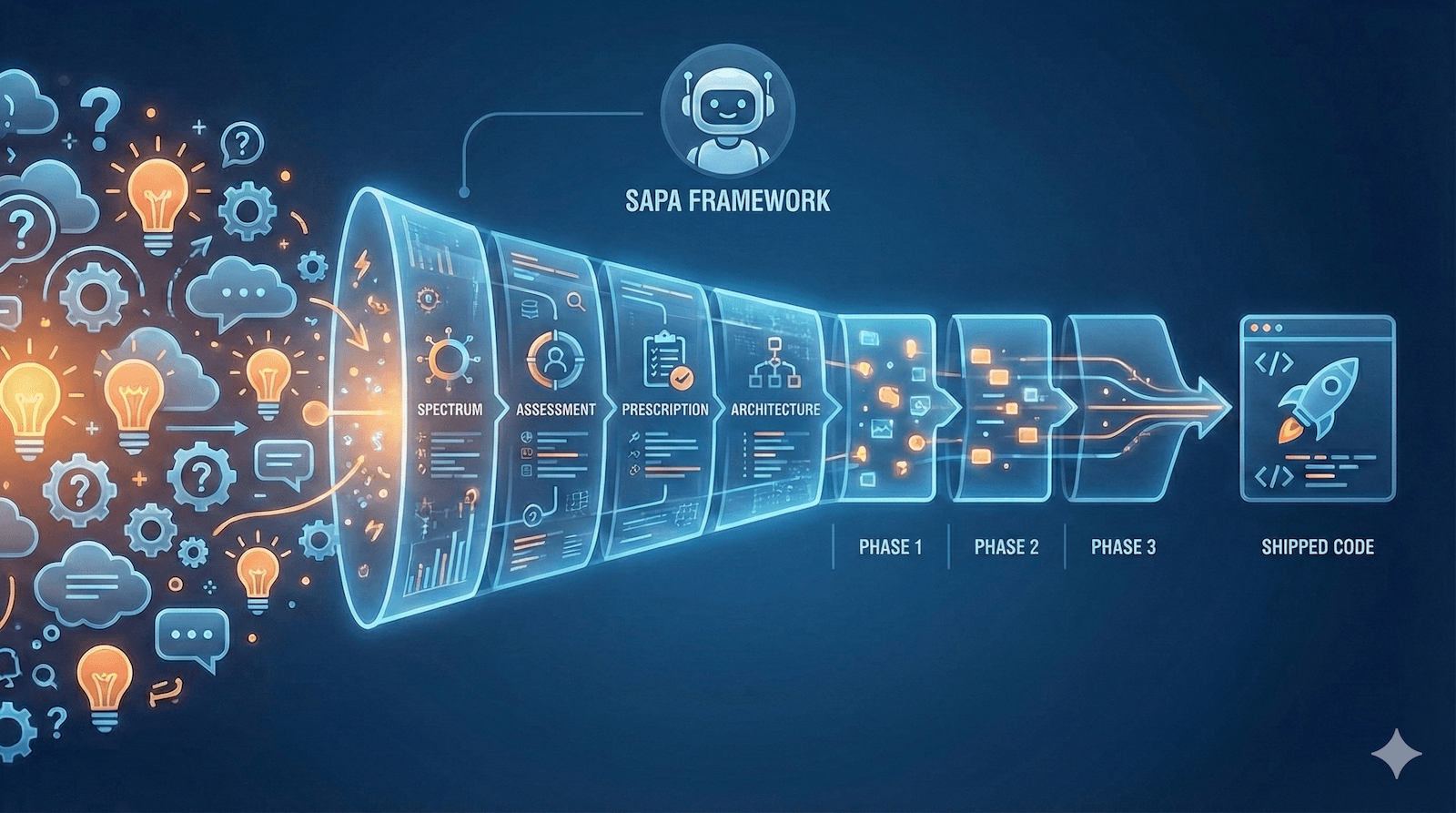

SAPA Executor: From Phase Briefs to Shipped Code

A few days ago I wrote about SAPA, which is basically a way to figure out how much planning you actually need before you start building something. The idea was to stop over-documenting throwaway projects and under-documenting the ones that matter.

It worked pretty well for that. But I ran into a problem I didn't really anticipate.

The Gap I Didn't Think About

SAPA gets you to what I called Phase Briefs. They look something like this:

# Phase Brief: Core Module

## Context

Greenfield - first phase after project setup

## Objective

Implement the primary data processing pipeline

## Deliverables

- [ ] pipeline.rs module

- [ ] CLI commands for processing

- [ ] Configuration loader

## Acceptance Criteria

- Processes input files correctly

- Handles errors gracefully

- Works on Windows and macOS

And that's... fine? Like, a human reading that knows what to do. But I wasn't handing it to a human. I was handing it to Claude Code.

What I kept running into was Claude Code asking me clarifying questions. "What's the file structure look like?" "Which error handling crate should I use?" "Where does the config file go?"

All reasonable questions. But I was spending the first 20 minutes of every session just re-explaining context that probably should have been written down somewhere.

The brief told Claude Code what to build. It didn't tell it how.

What I Started Doing Instead

So I started writing longer specs. Instead of bullet points, I'd include actual file paths, code scaffolds, validation steps, that kind of thing. More like a technical design doc than a brief.

The sessions got way more productive. Claude Code would just... go. For hours sometimes, without stopping to ask me things it should already know.

Eventually I realized I was doing the same expansion every time. Brief comes in, I flesh it out, Claude Code executes it. Same pattern over and over.

So I turned it into a skill.

What the Skill Does

I'm calling it SAPA Executor. It sits on top of the original SAPA framework and basically automates that expansion step.

The workflow ends up being:

Plan in Claude Web using SAPA, which gives you a

PHASE_PLAN.mdCopy that file to your project

Tell Claude Code to generate the phases

Execute them one at a time

The skill handles three things that SAPA didn't:

Expansion is the main one. It takes those short briefs and turns them into detailed specs with file paths, scaffolds, implementation notes, test scenarios.

Tracking was something I added after losing my place a few times. There's a PHASE_INDEX.md that keeps track of which phases are done, which session UUIDs go with which phase, and any notes or discoveries along the way.

Validation is just making sure each phase actually works before moving on. Checklists, basically.

What the Format Looks Like

Here's a simplified version of what comes out of SAPA:

# Phase Plan: [Project Name]

## Overview

**Project**: [Name]

**Type**: [CLI / Desktop App / Library / etc.]

**Stack**: [Key technologies]

## Architecture Overview

[Diagram or description]

## Phases

### Phase 1: Project Setup

**Objective**: Initialize project with proper structure

**Deliverables**: Config files, module stubs, build scripts

**Acceptance**: Project compiles and runs placeholder

### Phase 2: Core Module

**Objective**: Implement primary functionality

**Deliverables**: Main module, types, error handling

**Acceptance**: Core feature works end-to-end

And here's what the skill expands Phase 2 into:

# Phase 02: Core Module

> **Status**: ⬜ Not Started

> **Estimated effort**: 2-3 hours

> **Dependencies**: Phase 01 (must be complete)

## Context

### What Exists

- Project scaffold from Phase 01

- Configuration loader

- Basic CLI structure

### What We're Building

The primary data processing pipeline.

## Deliverables

### 1. Pipeline Module

**File**: `src/pipeline.rs`

**Purpose**: Handles the core processing logic

**Implementation**:

- Create `Pipeline` struct with configuration

- Implement `process()` method

- Add error types in `src/errors.rs`

**Scaffold**:

```rust

pub struct Pipeline {

config: Config,

}

impl Pipeline {

pub fn new(config: Config) -> Self { ... }

pub fn process(&self, input: &Path) -> Result<Output> { ... }

}

```

**Validation**:

- [ ] Compiles without warnings

- [ ] Unit tests pass

### 2. CLI Commands

**File**: `src/cli.rs`

**Implementation**:

- Add `process` subcommand

- Wire to Pipeline module

- Handle errors with user-friendly messages

## Validation Checklist

Before marking this phase complete:

- [ ] All deliverables implemented

- [ ] Code compiles without warnings

- [ ] `cargo test` passes

- [ ] Manual test: process sample input successfully

- [ ] Works on target platform(s)

## Test Scenarios

### Scenario 1: Happy Path

1. Run `app process input.txt`

2. **Expected**: Output file created, no errors

### Scenario 2: Missing Input

1. Run `app process nonexistent.txt`

2. **Expected**: Clear error message, exit code 1

## Handoff to Next Phase

Phase 3 will add [next feature]. It assumes:

- Pipeline module is stable

- Error handling patterns are established

- CLI structure supports additional commands

It's kind of verbose, but that's the point. Claude Code doesn't have to guess anything. It knows what files to create, what they should roughly look like, how to test them, and what the next phase expects.

The Tracking Thing

One problem I kept running into: phases don't always fit in one session.

I'd get halfway through something, close my laptop, come back the next day, and have no idea where I left off. Did I finish the error handling? Did I test on Windows yet? What was that weird edge case I found?

So the skill maintains an index file:

# Phase Index: [Project Name]

## Progress

| Phase | Name | Status | Session UUID | Notes |

|-------|------|--------|--------------|-------|

| 01 | Project Setup | ✅ Complete | 7f3a8b2c-... | |

| 02 | Core Module | ✅ Complete | 9d2e1f4a-... | Added retry logic |

| 03 | Extensions | 🟡 In Progress | a1b2c3d4-... | Windows path handling needed |

| 04 | Polish | ⬜ Not Started | | |

When I start a new session, I just point Claude Code at the index and it picks up where we left off. The notes column is for stuff I discovered during execution that might matter later. Things that didn't fit the original plan but need to be remembered.

How It Feels to Use

The skill responds to natural language. The main things I end up saying:

"generate phases from the phase plan"

"start phase 2"

"complete phase 2" (runs through the checklist)

"phase status"

It's not really a CLI. More like a conversation where Claude Code knows what I mean by "start phase 2" because it has the context.

What I Took Away From This

The thing I keep learning with AI tooling is that the information you need is different from the information the AI needs.

A Phase Brief makes sense to me because I can fill in the gaps. I know what "error handling" means in the context of this project. Claude Code doesn't, not unless I tell it.

SAPA was about figuring out how much planning you need. This is about figuring out how much detail Claude Code needs to execute without hand-holding.

They're kind of the same lesson applied at different points. Right-sizing the documentation for who's actually going to use it.

Links

I added the skill to the original SAPA gist:

sapa-framework.md- the planning methodology (unchanged)sapa-quick-invoke.md- quick invoke for Claude Web (unchanged)sapa-executor-skill.md- the execution skill for Claude CodePHASE_INDEX_TEMPLATE.md- the progress tracking template

To install it:

mkdir -p ~/.claude/skills/sapa-executor

# Copy sapa-executor-skill.md to ~/.claude/skills/sapa-executor/SKILL.md

Or you can just drop the skill file into your project's .claude/skills/ directory if you want it scoped to one project.

If you've been using SAPA and running into the same handoff friction I was, maybe give this a shot. That's what I built it for.